Co-existing with AI without being left behind

With AI coding hype putting growing pressure on engineers, organizations need to avoid the pitfalls of unsafe adoption and steer toward the right mindset.

Today, we need to use AI tools to our advantage, first to stay ahead and eventually simply to stay relevant. However, eagerness to offload our work to AI could also damage our capacity to grow as engineers, to ensure quality work, and to work together as a team.

The AI rush

The rush for AI and the adoption of AI coding tools are real. Last March, Anthropic’s CEO, Dario Amodei, declared:

“I think we will be there in three to six months, where AI is writing 90% of the code. And then, in 12 months, we may be in a world where AI is writing essentially all the code”.

Since then, coding and agentic tools have exploded, and their usage is encouraged across many industries.

A survey by the Pragmatic Engineer [src] found that 95% of respondents report using AI tools at least weekly, 75% use AI for half or more of their work, and 56% report doing 70%+ of their engineering work with AI.

There is no doubt that we need to use and test these tools, and especially to learn how to use them efficiently through trial-and-error loops. The most important thing is not to pick the right one but to keep an open mind about what’s coming.

Tools have been proliferating particularly for:

Prototyping for a technical or non-technical audience.

Refactoring, which can increasingly be done on a larger codebase, especially if a test suite exists.

Code reviews.

They also help engineers be:

More ambitious in the software we develop.

Faster to pick up new languages.

As success stories emerge, the expectation is now to deliver faster at the same quality, to deliver more at the same pace, or both. The last of these is the danger zone, where the rate of generation outpaces our rate of comprehension and leads to an understanding deficit.

Not trying the tool is a failure, but not using it wisely is an even bigger failure.

The erosion of systems thinking

Complexity has always been the enemy of software. Small flaws and hot fixes accumulate and jeopardize the overall software design. AI makes this even more dangerous, as engineers may lose track of what AI generates and of what the overall software design is. Not only do the small flaws still exist, but they can grow out of control without being noticed, or be noticed only when it is already too late.

To prevent this, AI tools need to be used consciously when:

Reading the code

Generating the code

Debugging the code

Misuse of AI tooling leads to poorer architecture quality and, therefore, higher debugging and maintenance costs.

Margaret-Anne Storey recently labeled this “cognitive debt”, inspired by the original term “technical debt”. In her article, she shares an anecdote about one of her student teams:

“But by weeks 7 or 8, one team hit a wall. They could no longer make even simple changes without breaking something unexpected. When I met with them, the team initially blamed technical debt: messy code, poor architecture, hurried implementations. But as we dug deeper, the real problem emerged: no one on the team could explain why certain design decisions had been made or how different parts of the system were supposed to work together. The code might have been messy, but the bigger issue was that the theory of the system, their shared understanding, had fragmented or disappeared entirely. They had accumulated cognitive debt faster than technical debt, and it paralyzed them.”

As AI tools take control, the codebase grows in ways the team is less and less familiar with, which only leads to slower development in the long term. If no one understands the succession of design decisions, it becomes difficult to tell when a component suffers from scope creep or holds too many responsibilities, and difficult to make even simple changes without breaking the codebase.

The question is how much work we should delegate to the AI to achieve fast and high-quality delivery.

Ultimately, as individual engineers, we are the only ones responsible for what we build and for growing our own skills. In the past, senior engineers guaranteed both by reviewing junior engineers’ code and by maintaining unit and integration test suites, which protected the codebase and grew talent in the company.

Now, AI tools make code generation a commodity, and the number of lines of code in one PR explodes. Of course, no one is able to review it thoroughly. The bottleneck shifts from generating the code to reviewing the code. The time saved on generation by one person is pushed to all the teammates who review it.
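The shift described above can be made concrete with a back-of-the-envelope calculation. The sketch below is only an illustration: the function name `net_team_hours` and all the numbers are hypothetical, and it assumes each reviewer spends the same extra time on the larger PR.

```python
def net_team_hours(
    generation_hours_saved: float,
    extra_review_hours_per_reviewer: float,
    reviewers: int,
) -> float:
    """Hours the author saved minus the extra hours spent by all reviewers.

    A negative result means the team as a whole lost time, even though
    the author of the PR individually gained some.
    """
    if reviewers < 0:
        raise ValueError("reviewers must be non-negative")
    return generation_hours_saved - extra_review_hours_per_reviewer * reviewers


if __name__ == "__main__":
    # Hypothetical numbers: the author saves 4 hours, but 3 reviewers
    # each need 2 extra hours to read the larger PR.
    print(net_team_hours(4.0, 2.0, 3))  # -2.0
```

With these made-up figures the team ends up two hours behind, which is the sense in which the cost of generation is moved to reviewers rather than removed.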

Historically, a hidden key metric of a team’s success has been how clear the codebase’s theory and design are.

When a new engineer joins the team, we consider the engineer effective once the first PR has been submitted. This is a sign that the new joiner has understood the fundamentals of the codebase.

When the codebase is well understood, developers can accurately estimate how long a task will take, and program management can better understand the timeline.

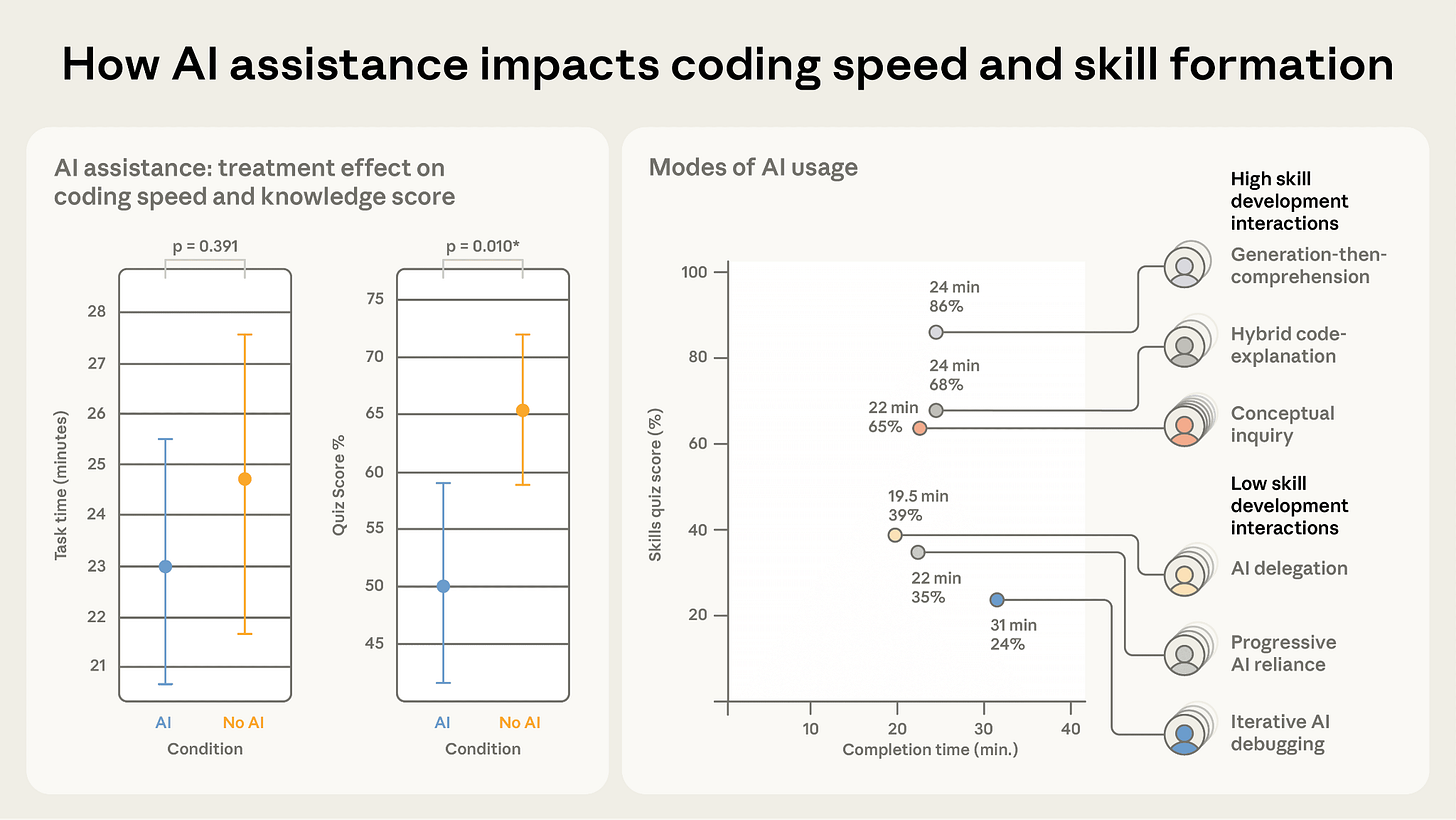

The issue is that delegating code generation to AI tooling decreases our comprehension of the codebase. Thinking about the problem before looking for the solution is a fundamental principle of comprehension. Anthropic collected data about how offloading cognitive tasks to AI coding tools could hamper skill growth [src].

As a team, we are responsible for respecting the campsite rule: we need to leave the campsite cleaner than we found it. AI-enhanced coding is the ‘arrival of plastic’ in our digital ecosystem. It provides immense utility and convenience, but without a disciplined ‘carry-in, carry-out’ policy, we risk polluting our codebase with non-biodegradable logic. To survive this, we must introduce strategic friction: moments where we deliberately slow down to ensure the ‘theory of the system’ remains resident in our collective memory.

Strategic Friction: Where to delegate?

Estimating how long a feature takes in an AI world isn’t just about how fast we can generate code; it’s about how much of that code we actually understand. We can think of the total effort as:

Total Effort = (Context × History) + (Design × Decomposition) + (Validation × Socialization)

When we offload “Design” or “Validation” to an AI without real oversight, we aren’t just saving time—we are eroding our understanding of the project. We stop learning the context and design that make a system work.
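To make the total-effort expression easier to reason about, here is a minimal sketch that evaluates it for one feature. The article gives no units or scale for the six factors, so the numbers below are purely hypothetical scores, and the class name `FeatureEffort` and the term labels are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureEffort:
    """Six hypothetical scores, one per factor in the total-effort expression."""

    context: float
    history: float
    design: float
    decomposition: float
    validation: float
    socialization: float

    def terms(self) -> dict[str, float]:
        # One entry per parenthesized product in the expression.
        return {
            "context_x_history": self.context * self.history,
            "design_x_decomposition": self.design * self.decomposition,
            "validation_x_socialization": self.validation * self.socialization,
        }

    def total(self) -> float:
        return sum(self.terms().values())


if __name__ == "__main__":
    # Made-up scores for a single feature.
    feature = FeatureEffort(
        context=2, history=3, design=4, decomposition=2,
        validation=3, socialization=2,
    )
    for name, value in feature.terms().items():
        print(f"{name}: {value}")
    print(f"total: {feature.total()}")  # 6 + 8 + 6 = 20
```

Keeping the three products visible separately is the point of the sketch: it shows which part of the effort shrinks when a step is handed to an AI tool, and therefore which part of the team's understanding is at risk.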

Even if an AI helps write your test suites or refactor your code, a human still needs to do the peer review. This isn’t just about catching bugs; it’s about making sure the “theory of the system” stays in our heads. If we don’t understand how the pieces fit together today, we won’t be able to predict how long the next feature will take tomorrow.

As of today, it is not safe to delegate the engineering flow or skip one of these steps in our routine without injecting and retrieving key human understanding. It is only safe to guide the AI through these steps and make sure the context is provided, in a prompt or a managed file structure. The hard work is still ours.

Developer velocity should be measured in the long term. As the codebase grows, is the team adding new features faster? Is the product more reliable and robust? Or are we slower and less confident about the code?
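One simple way to look at velocity over the long term is to track a delivery measure per period and check its trend. The sketch below fits a least-squares slope to such a series. The choice of measure (median days from first commit to release, per quarter) and the sample numbers are assumptions, not data from the article.

```python
def trend_slope(values: list[float]) -> float:
    """Least-squares slope of values against their index (0, 1, 2, ...).

    A positive slope means the measure is increasing from period to period.
    """
    n = len(values)
    if n < 2:
        raise ValueError("need at least two periods to compute a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    return numerator / denominator


if __name__ == "__main__":
    # Hypothetical median lead time in days for four consecutive quarters.
    lead_time_days = [3.0, 3.5, 4.0, 4.5]
    slope = trend_slope(lead_time_days)
    print(f"lead time changes by {slope:+.2f} days per quarter")
```

A rising lead time while the codebase grows would suggest the team is getting slower, whatever the short-term generation speed looks like.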

Ultimately, the metric of success hasn’t changed: if the codebase is not more robust and the engineer is not more skilled than they were yesterday, the tool, no matter how fast, has failed us. In the end, the human is the one responsible for catching bugs, reading code, writing code, and understanding the code design.

What is the skill of the engineer of the future? Whatever delivers the requested feature faster and at higher quality. As we increasingly delegate coding and all the surrounding activities, it becomes essential that we do the upstream and downstream work well.

Make sure you are still in control, with:

Architectural Sovereignty: Can you discern the right problem and explain how the generated solution integrates into the codebase theory?

Cognitive Sovereignty: Can you solve the problem mentally before the prompt is even written?

Meta-Sovereignty: Are you treating your workflow as a product that requires constant refactoring and learning?

As code generation becomes a commodity, the value of an engineer shifts from the ability to produce to the ability to discern. Speed is a vanity metric if it leaves us stranded in a codebase we no longer recognize. The goal is not just to build faster, but to build with durability and growth in mind.